BAYESIAN TIME SERIES

A (hugely selective) introductory overview - contacting current research frontiers -

Mike West Institute of Statistics & Decision Sciences Duke University

June 5th 2002, Valencia VII - Tenerife

Mike West - ISDS, Duke University

Valencia VII, 2002

Topics

Dynamic linear models (state space models)
  Sequential context, Bayesian framework
  Standard classes of models, model decompositions
Models and methods in physical science applications
  Time series decompositions, latent structure
  Neurophysiology - climatology - speech processing
Multivariate time series: Financial applications
  Latent structure, volatility models
Simulation-Based Computation
  MCMC
  Sequential simulation methodology

Standard Dynamic Models

Dynamic Linear Models
Linear State Space Models

  $y_t = x_t + \nu_t$
  $x_t = F_t' \theta_t$
  $\theta_t = G_t \theta_{t-1} + \omega_t$

signal $x_t$, state vector $\theta_t = (\theta_{t1}, \dots, \theta_{td})'$
regression vector $F_t$ and state matrix $G_t$
measurement errors $\nu_t$ and state innovations $\omega_t$: zero mean, often normally distributed

Examples

Slowly varying level observed with noise:
  $y_t = x_t + \nu_t$, $x_t = x_{t-1} + \omega_t$
Dynamic linear regression:
  $y_t = x_t + \nu_t$, $x_t = F_t' \theta_t$, $\theta_t = \theta_{t-1} + \omega_t$
Error models for $\nu_t$, $\omega_t$:
  normal distributions
  mixtures of normals: outliers and abrupt structural changes
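A minimal simulation sketch of the first example, the slowly varying level observed with noise. The function name, the constant variances `v` (observation error) and `w` (level innovation), and the starting level `x0` are illustrative assumptions, not part of the talk.

```python
import random


def simulate_local_level(n, v=1.0, w=0.1, x0=0.0, seed=0):
    """Simulate y_t = x_t + noise, x_t = x_{t-1} + innovation.

    v and w are the (assumed constant) variances of the observation
    noise and of the level innovation.
    """
    rng = random.Random(seed)
    x = x0
    xs, ys = [], []
    for _ in range(n):
        x = x + rng.gauss(0.0, w ** 0.5)   # level evolves as a random walk
        y = x + rng.gauss(0.0, v ** 0.5)   # observation adds measurement noise
        xs.append(x)
        ys.append(y)
    return xs, ys


levels, observations = simulate_local_level(100)
```

A small `w` relative to `v` gives a level that drifts slowly beneath comparatively noisy observations, which is the situation the example describes.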

Simple regression example

  $y_t = x_t + \nu_t$, $x_t = a_t + b_t X_t$
  $F_t = (1, X_t)'$ and $\theta_t = (a_t, b_t)'$ wanders through time

[Figure: plot against $X_t$ with Region 1 and Region 2 marked]

Sales Data Example

[Figure: MARKET and SALES series, 1/75 to 1/85]

[Figure: Sales plotted against Market]

[Figure: RATIO by quarter and year, 1/75 to 1/85]

Simple Regression Example

Relative to static model, dynamic regression delivers:
  improved estimation via adaptation for local regression parameters, with increased (honest) uncertainty about regression parameters
  adaptability to (small) changes: improved point forecasts
  partitions variation (parameter vs observation error): increased precision of stated forecasts
  i.e., improved prediction: point forecasts AND precision

General Dynamic Linear Model

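  $y_t = x_t + \nu_t$
  $x_t = F_t' \theta_t$
  $\theta_t = G_t \theta_{t-1} + \omega_t$

[Diagram: chain of states $\theta_{t-1} \to \theta_t \to \theta_{t+1}$, each state $\theta_s$ linked to its signal $x_s$ and observation $y_s$]

Sequential model definition: Markov evolution structure
CI (conditional independence) structure: $\theta_t$ sufficient for future at time $t$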

Bayesian Forecasting

Key concepts:
  Bayesian: modelling & learning is probabilistic
  Time-varying parameter models: often non-stationary
  Sequential view, sequential model definitions: encourages interaction, intervention
Statistical framework:
  Forecasting: "What might happen?" and "What if?"
  Data processing and statistical learning from observations
  Updating of models and probabilistic summaries of belief
  Time series analysis ... Retrospection: "What happened?"

Bayesian Machinery

Inferences based on information $D_t = \{(y_1, \dots, y_t), I_t\}$
Find and summarise $p(\theta_t | D_t)$ and $p(\theta_1, \dots, \theta_t | D_t)$, and update as $t \to t+1$
Forecasts: $p(y_{t+1}, \dots, y_{t+k} | D_t)$, and update as $t \to t+1$
Implementation & computations:
  Linear/normal models: neat theory, Kalman filtering
  Extend to infer variance components, non-normal errors ... need approximations, simulation methods, MCMC
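For the linear/normal case, the update from $t-1$ to $t$ has a closed form. The recurrences below are a sketch in the notation of West and Harrison (1997). The symbols $m_t, C_t, a_t, R_t, f_t, Q_t, A_t, e_t$, and the variances $V_t$ (measurement error) and $W_t$ (state innovation), are assumed here and do not appear on the slides. The sketch also assumes known $V_t$, $W_t$ and no external information beyond $y_t$ arriving at time $t$.

```latex
\begin{align*}
\text{Posterior at } t-1:\quad & (\theta_{t-1} \mid D_{t-1}) \sim N(m_{t-1}, C_{t-1}) \\
\text{Prior at } t:\quad & (\theta_t \mid D_{t-1}) \sim N(a_t, R_t),
  \quad a_t = G_t m_{t-1}, \quad R_t = G_t C_{t-1} G_t' + W_t \\
\text{One-step forecast:}\quad & (y_t \mid D_{t-1}) \sim N(f_t, Q_t),
  \quad f_t = F_t' a_t, \quad Q_t = F_t' R_t F_t + V_t \\
\text{Posterior at } t:\quad & (\theta_t \mid D_t) \sim N(m_t, C_t),
  \quad m_t = a_t + A_t e_t, \quad C_t = R_t - A_t A_t' Q_t \\
& A_t = R_t F_t / Q_t, \quad e_t = y_t - f_t
\end{align*}
```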

Commercial Applications

Short term forecasting of consumer sales and demand
Monitoring: stocks and inventories of consumer products
Many items or sectors: aggregation and multi-level models
Standard models for commercial applications:
  Data = Trend + Seasonal + Regression + Error
  $y_t = x_{1t} + x_{2t} + x_{3t} + \nu_t$

Models in Commercial Applications

Component signals $x_{jt}$ follow individual dynamic models
Trends: e.g., locally linear trend
  $x_{1t} = x_{1,t-1} + \beta_t + \partial x_{1t}$, $\beta_t = \beta_{t-1} + \partial \beta_t$
Seasonals:
  $x_{2t} = \sum_j \left( a_{j,t} \cos(2\pi j/p) + b_{j,t} \sin(2\pi j/p) \right)$
  where $(a_{j,t}, b_{j,t})$ wander through time
Key concept: Model (De)Composition
  Modelling, prior specification, interventions: component-wise
  Posterior inference: detrending, deseasonalisation, etc

Special Classes of DLMs

Time Series DLMs: constant $F$, $G$
  $y_t = x_t + \nu_t$, $x_t = F' \theta_t$, $\theta_t = G \theta_{t-1} + \omega_t$
  Includes all standard point-forecasting methods (exponential smoothing, variants, ...)
  Polynomial trend and seasonal components in commercial models
  Includes all practically useful ARIMA models
  Multiple representations: $\theta_t \to E \theta_t$, $G \to E G E^{-1}$
General class: time-varying $F_t$, $G_t$
  Includes non-stationary models, time-varying ARIMA models, etc.

Simulation-Based Computation

  $y_t = x_t + \nu_t$, $x_t = F_t' \theta_t$, $\theta_t = G_t \theta_{t-1} + \omega_t$

Normal error models $p(\nu_t)$, $p(\omega_t)$
Fixed time window $t = 1, \dots, n$
State vector set: $\Theta = \{\theta_1, \dots, \theta_n\}$
Require: full posterior sample from $p(\Theta | D_n)$
Available via Forward-filtering, Backward-sampling algorithm

Carter and Kohn (1994) Biometrika
Frühwirth-Schnatter (1994) J Time Series Anal
West and Harrison (1997)

FFBS Algorithm

Forward-filtering:
  Standard normal/linear analysis: Kalman filter delivers normal $p(\theta_t | D_t)$ at each $t = 1, \dots, n$
Backward-sampling:
  at $t = n$: sample $\theta_n^*$ from $p(\theta_n | D_n)$
  for $t = n-1, n-2, \dots, 1$: sample $\theta_t^*$ from normal distribution $p(\theta_t | D_t, \theta_{t+1}^*)$
  Builds up $\Theta^*$ as $\theta_n^*, \theta_{n-1}^*, \dots$
Exploits Markovian/CI model structure
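The two passes can be sketched for the simplest case: a scalar local level model with F = G = 1 and known variances. The function name, the prior on the state at time 0 (`m0`, `c0`) and the variance arguments `v`, `w` are illustrative assumptions. A general DLM needs the matrix versions of the same steps.

```python
import random


def ffbs_local_level(ys, v, w, m0=0.0, c0=100.0, rng=None):
    """Draw one state path from its joint posterior given all the data.

    Model (assumed): y_t = theta_t + noise (variance v),
    theta_t = theta_{t-1} + innovation (variance w), theta_0 ~ N(m0, c0).
    """
    rng = rng or random.Random(0)

    # Forward filtering: posterior mean m_t and variance C_t at each t.
    ms, cs = [], []
    m, c = m0, c0
    for y in ys:
        r = c + w                  # prior variance R_t = C_{t-1} + W
        gain = r / (r + v)         # adaptive coefficient A_t = R_t / Q_t
        m = m + gain * (y - m)     # m_t = a_t + A_t e_t, with a_t = m_{t-1}
        c = gain * v               # C_t = R_t - A_t^2 Q_t, equal to A_t V here
        ms.append(m)
        cs.append(c)

    # Backward sampling: last state first, then each state given the next.
    n = len(ys)
    theta = [0.0] * n
    theta[-1] = rng.gauss(ms[-1], cs[-1] ** 0.5)
    for t in range(n - 2, -1, -1):
        b = cs[t] / (cs[t] + w)                      # B_t = C_t / R_{t+1}
        mean = ms[t] + b * (theta[t + 1] - ms[t])    # uses a_{t+1} = m_t
        var = b * w                                  # C_t - B_t^2 R_{t+1}
        theta[t] = rng.gauss(mean, var ** 0.5)
    return theta


path = ffbs_local_level([1.0, 1.2, 0.8, 1.5, 1.1], v=0.5, w=0.1)
```

Each call returns one joint draw of the whole state path. Repeated calls with the same `rng` object give independent draws.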

MCMC in DLMs

DLM parameters: e.g., constant model
  $y_t = x_t + \nu_t$, $x_t = F' \theta_t$, $\theta_t = G \theta_{t-1} + \omega_t$
Parameters:
  Variances (variance matrices) of $\nu_t$, $\omega_t$
  Elements of $F$, $G$
  Indicators in normal mixture models for errors
Add parameters to analysis: MCMC utilising FFBS

MCMC in DLMs

Parameters $\psi$ (may depend on sample size)
Gibbs sampling: $p(\Theta, \psi | D_n)$ iteratively resampled via
  Apply FFBS algorithm to draw $\Theta^*$ from $p(\Theta | \psi^*, D_n)$
  Draw new value of $\psi^*$ from $p(\psi | \Theta^*, D_n)$
  Iterate
Standard Gibbs sampling: MCMC
May need creativity in sampling $\psi$: Metropolis-Hastings, etc
Often easy: as in Autoregressive DLM
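A sketch of this two-block Gibbs scheme for a scalar local level model, where the parameters are the two variances. Everything specific here is an assumption made for illustration: the inverse-gamma priors IG(a0, b0) on both variances, the normal prior placed directly on the first state (`m1`, `c1`), and all function names.

```python
import random


def ffbs(ys, v, w, m1, c1, rng):
    """One joint draw of the state path, with prior theta_1 ~ N(m1, c1)."""
    ms, cs = [], []
    for t, y in enumerate(ys):
        if t == 0:
            a_prior, r = m1, c1                 # prior given directly at t = 1
        else:
            a_prior, r = ms[-1], cs[-1] + w     # evolve: R_t = C_{t-1} + W
        gain = r / (r + v)
        ms.append(a_prior + gain * (y - a_prior))
        cs.append(gain * v)                     # C_t = R_t V / (R_t + V)
    theta = [0.0] * len(ys)
    theta[-1] = rng.gauss(ms[-1], cs[-1] ** 0.5)
    for t in range(len(ys) - 2, -1, -1):
        b = cs[t] / (cs[t] + w)
        theta[t] = rng.gauss(ms[t] + b * (theta[t + 1] - ms[t]), (b * w) ** 0.5)
    return theta


def inv_gamma(shape, rate, rng):
    """Draw from an inverse-gamma via the reciprocal of a gamma draw."""
    return 1.0 / rng.gammavariate(shape, 1.0 / rate)


def gibbs_local_level(ys, n_iter=200, a0=2.0, b0=1.0, seed=0):
    """Alternate states | variances (FFBS) and variances | states."""
    rng = random.Random(seed)
    n = len(ys)
    v, w = 1.0, 1.0                             # arbitrary starting values
    draws = []
    for _ in range(n_iter):
        theta = ffbs(ys, v, w, m1=0.0, c1=100.0, rng=rng)
        sse_obs = sum((y - th) ** 2 for y, th in zip(ys, theta))
        sse_evo = sum((theta[t] - theta[t - 1]) ** 2 for t in range(1, n))
        v = inv_gamma(a0 + n / 2.0, b0 + sse_obs / 2.0, rng)
        w = inv_gamma(a0 + (n - 1) / 2.0, b0 + sse_evo / 2.0, rng)
        draws.append((v, w))
    return draws


draws = gibbs_local_level([0.1, 0.3, 0.2, 0.6, 0.9, 1.1, 0.8, 1.2], n_iter=100)
```

Both conditional posteriors for the variances are conjugate here, which is the "often easy" case. Parameters entering F or G would generally need a Metropolis-Hastings step instead.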

MCMC: Autoregressive Model Example

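Data: $y_t = x_t + \nu_t$
State AR(d): $x_t = \sum_{j=1}^{d} \phi_j x_{t-j} + \epsilon_t$
DLM for $x_t$:
  $x_t = (1, 0, \dots, 0) \mathbf{x}_t$, $\mathbf{x}_t = G \mathbf{x}_{t-1} + (\epsilon_t, 0, \dots, 0)'$

\[
G = \begin{pmatrix}
\phi_1 & \phi_2 & \phi_3 & \cdots & \phi_{d-1} & \phi_d \\
1 & 0 & 0 & \cdots & 0 & 0 \\
0 & 1 & 0 & \cdots & 0 & 0 \\
\vdots & & \ddots & & & \vdots \\
0 & 0 & 0 & \cdots & 1 & 0
\end{pmatrix}
\]

Parameters: $\{\phi_1, \dots, \phi_d; V(\nu_t), V(\epsilon_t)\}$
Conditional posteriors standard: linear regression parameters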

Models in Scientific Applications

Historical interest in biomedical monitoring (change-points), engineering applications (control, tracking), environmental monitoring, ...
Goals:
  Exploratory discovery of interpretable latent processes
  Nonstationary time series: hidden quasi-periodicities
  Changes over time at different time scales
  Time:frequency structure (in time domain)
State-space models:
  Stationary and/or nonstationary, time-varying parameters
  General decomposition theory for state-space models
  DLM autoregressions and time-varying autoregressions

Autoregressive DLM

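Latent AR(d) process: $x_t = \sum_{j=1}^{d} \phi_j x_{t-j} + \epsilon_t$
Time series: $y_t = x_{0t} + x_t + \nu_t$, with trend, etc in $x_{0t}$
DLM for $x_t$:
  $x_t = (1, 0, \dots, 0) \mathbf{x}_t$, $\mathbf{x}_t = G \mathbf{x}_{t-1} + (\epsilon_t, 0, \dots, 0)'$

\[
G = \begin{pmatrix}
\phi_1 & \phi_2 & \phi_3 & \cdots & \phi_{d-1} & \phi_d \\
1 & 0 & 0 & \cdots & 0 & 0 \\
0 & 1 & 0 & \cdots & 0 & 0 \\
\vdots & & \ddots & & & \vdots \\
0 & 0 & 0 & \cdots & 1 & 0
\end{pmatrix}
\]

$\mathbf{x}_t$ latent, unobserved
$G = G(\phi)$ with $\phi = (\phi_1, \dots, \phi_d)'$ to be estimated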

Time Series Decomposition

Eigenstructure: $G = E^{-1} A E$
Reparametrise to diagonal model: $\mathbf{x}_t \to E \mathbf{x}_t$
Transforms to
  $x_t = \sum_{j=1}^{d_z} z_{t,j} + \sum_{j=1}^{d_a} a_{t,j}$
z terms: one for each pair of complex conjugate eigenvalues
a terms: one for each real eigenvalue
underlying latent processes $z_{t,j}$ and $a_{t,j}$ follow simple models:
  $a_{t,j}$ is AR(1) process - short-term correlations
  $z_{t,j}$ is quasi-periodic ARMA(2,1) - noisy sine wave with randomly time-varying amplitude & phase, fixed frequency
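To make the eigenvalue reading concrete, here is a small sketch for the AR(2) case, where the two eigenvalues of G are the roots of z^2 - phi1 z - phi2 = 0. A complex pair gives one quasi-periodic component whose wavelength is 2 pi divided by the eigenvalue argument. Real roots give AR(1) components. The function names and the example coefficients are illustrative assumptions.

```python
import cmath
import math


def ar2_eigenvalues(phi1, phi2):
    """Eigenvalues of the AR(2) state matrix [[phi1, phi2], [1, 0]]."""
    disc = cmath.sqrt(phi1 * phi1 + 4.0 * phi2)
    return (phi1 + disc) / 2.0, (phi1 - disc) / 2.0


def describe(eig, tol=1e-12):
    """Classify one eigenvalue as a real (AR(1)) or complex (periodic) term."""
    if abs(eig.imag) < tol:
        # Real eigenvalue: the value is the AR(1) coefficient.
        return ("real", eig.real, None)
    # Complex eigenvalue: modulus and wavelength of the periodic term.
    wavelength = 2.0 * math.pi / abs(cmath.phase(eig))
    return ("complex", abs(eig), wavelength)


# AR(2) built to have modulus 0.9 and wavelength 12 time units.
phi1 = 2.0 * 0.9 * math.cos(2.0 * math.pi / 12.0)
phi2 = -0.9 ** 2
components = [describe(e) for e in ar2_eigenvalues(phi1, phi2)]
```

For higher orders the same reading applies to the d eigenvalues of G, which would be computed numerically rather than by the quadratic formula.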

Time-varying Autoregression

TV-AR(d) model: $x_t = \sum_{j=1}^{d} \phi_{t,j} x_{t-j} + \epsilon_t$
AR parameter: $\phi_t = (\phi_{t,1}, \dots, \phi_{t,d})'$ wanders through time:
  $\phi_t = \phi_{t-1} + \omega_t$, stochastic shocks $\omega_t$
Innovations: $\epsilon_t \sim N(0, \sigma_t^2)$, time-varying variance $\sigma_t^2$
Flexible representations:
  non-stationary process, time-varying spectral properties
  latent component structure
  other evolutions for $\phi_t$ (e.g., Godsill et al on speech processing)

DLM Form of TV-AR(d):

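  $x_t = (1, 0, \dots, 0) \mathbf{x}_t$
  $\mathbf{x}_t = G(\phi_t) \mathbf{x}_{t-1} + (\epsilon_t, 0, \dots, 0)'$
  $\phi_t = \phi_{t-1} + \omega_t$

with

\[
G(\phi_t) = \begin{pmatrix}
\phi_{t,1} & \phi_{t,2} & \cdots & \phi_{t,d-1} & \phi_{t,d} \\
1 & 0 & \cdots & 0 & 0 \\
0 & 1 & \cdots & 0 & 0 \\
\vdots & & \ddots & & \vdots \\
0 & 0 & \cdots & 1 & 0
\end{pmatrix}
\]

Analysis: Posterior distributions for $\{\phi_t, \sigma_t : \forall t\}$
Component models: $y_t = x_{0t} + x_{1t} + \nu_t$
  infer latent TV-AR processes too: $\{x_{0t}, \mathbf{x}_t : \forall t\}$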

TVAR Decomposition

  $x_t = \sum_{j=1}^{d_z} z_{t,j} + \sum_{j=1}^{d_a} a_{t,j}$

z terms: one for each pair of complex conjugate eigenvalues
a terms: one for each real eigenvalue
underlying latent processes:
  $a_{t,j}$ is TV-AR(1) process - short-term correlations + time-varying correlation
  $z_{t,j}$ is TV-ARMA(2,1) - noisy sine wave with randomly time-varying amplitude & phase + time-varying frequency ($2\pi$/wavelength)
Number of complex/real eigenvalues can vary over time too!

Time:Frequency Analysis

Fit flexible (high order?) AR or TV-AR models
Estimate latent components and their frequencies, amplitudes over time
Time domain representation of spectral structure
Often:
  some $z_{t,j}$ physically meaningful, some (high frequency) represent noise, model approximation
  $a_{t,j}$: noise, model approximation and (possibly) low frequency trend

Paleoclimatology Example

deep ocean cores: relative abundance of $\delta^{18}$O
  $\delta^{18}$O decreases as global temperatures increase (smaller ice mass)
  reverse sign: higher recent global temperatures
well known periodicities: earth orbital dynamics, impact on solar insolation
  Milankovitch; Shackleton et al since 1976
  eccentricity: 95-120 kyear
  obliquity:    40-42 kyear
  precession:   19-25 kyear (1 or 2?)

Oxygen Isotope Data

Form of time variation in individual cycles?
Timing/nature of onset of ice-age cycle: eccentricity component, 1000 kyears ago?
Time scale: errors, interpolation, ...; measurement, sampling error, etc
Models: High order TV-AR, $p = 20$, plus smooth trend (outliers?)
  Variance components estimated: changing AR parameters
Decomposition: Posterior mean of $\mathbf{x}_t$, $\phi_{t,j}$ at each $t$
  4 dominant quasi-periodic components: order by estimated amplitude (innovation variance)
  Others: residual structure &/or contaminations

[Figure: oxygen isotope series; oxygen level against reversed time in k years, 0 to 2500]

[Figure: trajectories of time-varying periods of components; period against reversed time in k years]

[Figure: trend and components 1 to 4 against reversed time in k years]

Oxygen

Wavelengths vary only modestly
Estimated periods/wavelengths consistent with geological determinations:
  .... 108-120, peak 110
  .... 40.8-41.6, peak 41.5
  .... 22.2-23, peak 22.8
Switch due to order of estimated amplitude
Geological interpretation? Structural climate change 1.1m yrs?

Example: EEG Study

Clinical uses of electroconvulsive therapy
Measure seizure treatment outcomes via long, multiple EEG (electroencephalogram) series
  electrical potential fluctuations on scalp
Many multiple series: one seizure, 19 channels, 256/sec
Models to:
  Characterise seizure waveforms ... time-varying amplitudes at ranges of frequencies (alpha waves, etc)
  Superimposed on normal waveform, noise, ...
  Identify/extract latent components: infer seizure effects
  Spatial connectivities: related multiple series

Why TVAR Models?

[Figure: three series plotted against time, two panels over 0 to 200 and one over 0 to 2000; vertical range -300 to 200]

EEG Decomposition Examples

[Figure: central section of Ictal19-Cz; panels for beta, beta, alpha, alpha:theta, theta and delta components and the data, against time]

[Figure: S26.Low Patient/Treatment; panels for beta, alpha, theta2, theta1, delta2 and delta1 components and the data, against time]

EEG Treatment Comparison

Two EEG series on one individual: S26.Low cf S26.Mod
Repeat seizures with varying ECT treatment
TVAR(20) with time-varying $\sigma_t^2$
Evident high frequency structure and spiky traces: higher order models
Several frequency bands influenced by seizure: several components
Identification issues

EEG Comparisons

[Figure: S26.Low vs. S26.Mod, low frequency trajectories against time, with theta and delta ranges marked]

Sequential Simulation Analysis

Time $t$: States $\Theta_t = \{\theta_{t-k}, \dots, \theta_t\}$ of interest
  Summarised via set of posterior samples: $p(\Theta_t | D_t)$
Time $t+1$: Observe $y_{t+1} \sim p(y_{t+1} | \theta_{t+1})$
  Require updated summary, posterior samples: $p(\Theta_{t+1} | D_{t+1})$
Issues:
  Expanding/changing state space and dimension
  Simulation-based summaries: discrete approximations
  Inference on parameters as well as state vectors
  New data may conflict with prior/predictions

Particle Filtering

Key Goal: sequentially update posteriors $p(\theta_t | D_t) \to p(\theta_{t+1} | D_{t+1})$
Numerical approximations (points/weights): $\{\theta_t^{(j)}, \omega_t^{(j)} : j = 1, \dots, N_t\}$
Theoretical update: $p(\theta_{t+1} | D_{t+1}) \propto p(y_{t+1} | \theta_{t+1}) \, p(\theta_{t+1} | D_t)$
MC approximation to prior:
  $p(\theta_{t+1} | D_t) \approx \sum_{k=1}^{N_t} \omega_t^{(k)} \, p(\theta_{t+1} | \theta_t^{(k)})$
Mixture prior: sample and accept/reject ideas natural

Example: Auxiliary Particle Filter

APF state update from $t \to t+1$:
  for each $k$, estimates $\mu_{t+1}^{(k)} = E(\theta_{t+1} | \theta_t^{(k)})$ and weights $g_{t+1}^{(k)} \propto \omega_t^{(k)} \, p(y_{t+1} | \mu_{t+1}^{(k)})$
  sample (aux) indicators $j$ with probs $g_{t+1}^{(j)}$
  time $t+1$ samples: $\theta_{t+1}^{(j)} \sim p(\theta_{t+1} | \theta_t^{(j)})$
  and weights: $\omega_{t+1}^{(j)} = p(y_{t+1} | \theta_{t+1}^{(j)}) \, / \, p(y_{t+1} | \mu_{t+1}^{(j)})$
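One APF update can be sketched for a scalar random-walk state observed with normal noise, so that the one-step-ahead estimate for each particle is just its current value. The model, the function names and the variance arguments `v` (observation) and `w` (evolution) are assumptions for illustration. Weights are normalised at the end for convenience.

```python
import math
import random


def normal_pdf(x, mean, var):
    """Density of N(mean, var) evaluated at x."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def apf_step(particles, weights, y_next, v, w, rng):
    """One auxiliary particle filter update for a random-walk state.

    Assumed model: theta_{t+1} = theta_t + N(0, w), y_{t+1} = theta_{t+1} + N(0, v).
    """
    n = len(particles)
    # Step 1: point estimates of the next state (random walk: unchanged).
    mus = list(particles)
    # Step 2: first-stage weights, proportional to weight times likelihood at mu.
    g = [wk * normal_pdf(y_next, mu, v) for wk, mu in zip(weights, mus)]
    # Step 3: sample auxiliary indicators with probabilities proportional to g.
    idx = rng.choices(range(n), weights=g, k=n)
    # Step 4: propagate each selected particle through the evolution density.
    new_particles = [rng.gauss(particles[k], w ** 0.5) for k in idx]
    # Step 5: second-stage weights, likelihood at the sample over likelihood at mu.
    raw = [
        normal_pdf(y_next, th, v) / normal_pdf(y_next, mus[k], v)
        for th, k in zip(new_particles, idx)
    ]
    total = sum(raw)
    return new_particles, [r / total for r in raw]


rng = random.Random(0)
start = [rng.gauss(0.0, 1.0) for _ in range(500)]
new_particles, new_weights = apf_step(start, [1.0 / 500] * 500, 0.5, 1.0, 0.2, rng)
```

This plain-density version can underflow when an observation is far from every particle. Working with log densities is the usual remedy and is omitted here for brevity.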

Multivariate Models in Finance

(Quintana et al invited talk, Valencia VII)
Futures markets, exchange rates, portfolio selection
Multiple time series: time-varying covariance patterns
Econometric/dynamic regressions/hierarchical models
Latent factors in hierarchical, dynamic models
Common time-varying structure in multiple series
Bayesian multivariate stochastic volatility

p-vector $y_t = (y_{1t}, \dots, y_{pt})'$; e.g., $y_{it}$ is returns on investment $i$ (exchange rate futures, etc.)

Dynamic Factor Models

Exchange rate modelling for dynamic asset allocation
Monthly (daily) currency exchange rates
Dynamic regression/econometric predictors
Residual structure and residual stochastic volatility
  Time-varying variances and covariances
  Dynamic factor models
Dynamic asset allocation & risk management: portfolio studies
Bayesian analysis: model fitting, sequential analysis, forecasting, decision analysis

Excess Returns on Exchange Rates (monthly)

[Figure: eight panels of monthly returns (%), vertical range -10 to 10, time axis labelled 7602 to 9710; panels: DEM, JPY, GBP, AUD, CAD, CHF, FRF, NLG]

Short Interest Rates (monthly)

[Figure: eight panels of short interest rate (IS), vertical range -0.10 to 0.10, time axis labelled 7602 to 9710; panels: DEM, JPY, GBP, AUD, CAD, CHF, FRF, NLG]

Stochastic Volatility Factor Models

  $y_t = \text{dynamic regression}_t + \text{residual}_t$, $\text{residual}_t \sim N(0, \Sigma_t)$
  $\text{residual}_t = X_t f_t + e_t$

$f_t$ ... k-vector of latent factors, $f_t \sim N(f_t | 0, F_t)$
$e_t$ ... q-vector of idiosyncrasies, $e_t \sim N(e_t | 0, E_t)$

  $F_t = \mathrm{diag}(\exp(\lambda_{1,t}), \dots, \exp(\lambda_{k,t}))$
  $E_t = \mathrm{diag}(\exp(\lambda_{k+1,t}), \dots, \exp(\lambda_{k+q,t}))$
  $\Sigma_t = X_t F_t X_t' + E_t$

Factor and idiosyncratic latent log volatilities: $\lambda_t = (\lambda_{1,t}, \dots, \lambda_{k+q,t})'$
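As a small worked sketch, the residual covariance at one time point can be assembled from a loadings matrix and the two sets of log volatilities: the loadings times the diagonal factor variances times the transposed loadings, plus the diagonal idiosyncratic variances. The function name and the numbers in the example are made up for illustration and are not estimates from the talk.

```python
import math


def factor_covariance(loadings, log_vol_factors, log_vol_idio):
    """Covariance X F X' + E with F and E diagonal, entries exp(log volatility).

    loadings: q rows, each a list of k factor loadings.
    log_vol_factors: k factor log volatilities.
    log_vol_idio: q idiosyncratic log volatilities.
    """
    q, k = len(loadings), len(loadings[0])
    f = [math.exp(lv) for lv in log_vol_factors]   # factor variances
    e = [math.exp(lv) for lv in log_vol_idio]      # idiosyncratic variances
    sigma = [
        [sum(loadings[i][h] * f[h] * loadings[j][h] for h in range(k)) for j in range(q)]
        for i in range(q)
    ]
    for i in range(q):
        sigma[i][i] += e[i]                        # add the diagonal E term
    return sigma


# Three series, two factors; loadings follow the unit-diagonal, zero-above pattern.
X = [[1.0, 0.0], [0.5, 1.0], [0.2, 0.3]]
sigma = factor_covariance(X, [0.0, 0.0], [0.0, 0.0, 0.0])
```

All cross-series covariance comes from the shared factors. The idiosyncratic term only adds to the diagonal.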

Dynamic Factor Model: Factor Loadings Structure

Constant factor loadings matrix $X_t = X$, or slowly varying over time
Identification constraints on $X$:

\[
X = \begin{pmatrix}
1 & 0 & 0 & \cdots & 0 \\
x_{2,1} & 1 & 0 & \cdots & 0 \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
x_{k,1} & x_{k,2} & x_{k,3} & \cdots & 1 \\
x_{k+1,1} & x_{k+1,2} & x_{k+1,3} & \cdots & x_{k+1,k} \\
\vdots & \vdots & \vdots & & \vdots \\
x_{q,1} & x_{q,2} & x_{q,3} & \cdots & x_{q,k}
\end{pmatrix}
\]

Series order defines interpretation of factors

Stochastic Volatility Models: Factors & Idiosyncrasies

Multivariate SV models: vector AR(1) model for latent log volatilities $\lambda_t$
  volatility persistence: AR(1) coefficients $\Phi$ (diagonal)
  marginal volatility levels: time-varying $\mu_t$
  dependent innovations: shocks to volatilities related across series
  global/sector effects represented through correlations

  $\lambda_t = \mu_t + \Phi (\lambda_{t-1} - \mu_{t-1}) + \omega_t$, $\omega_t \sim N(0, U)$, $\mu_t \sim$ random walk

Dynamic Regressions and Shrinkage Models

several predictors (e.g., interest rates)
regression coefficients time-varying
shrinkage models: coefficients related across series
  e.g., sensitivity to short-term interest rates similar across currencies
Series $j$, time $t$:
  $\beta_{jt} = \gamma_{jt} \beta_{j,t-1} + (1 - \gamma_{jt}) \beta_t + \text{innovation}_{jt}$
  global or sector average $\beta_t$
  time-varying degrees of shrinkage $\gamma_{jt}$
multiple series, several predictors: state-space model formulations

Model Fitting & Analysis: MCMC

Inference based on a fixed sample over $t = 1, \dots, T$
Bayesian analysis via posterior simulations
Monte Carlo samples from joint posterior for model parameters, dynamic regression parameters, AND latent processes: factors & volatilities $\{f_t, \lambda_t : t = 1, \dots, T\}$
examples of highly structured, hierarchical models with many latent variables and parameters
custom MCMC built of standard modules
simple MC evaluation of forecast/predictive distributions

Model Fitting & Analysis: Sequential Analyses

Sequential particle filtering over $t = T+1, T+2, \dots$
particulate approximation to posterior distributions
  prior: cloud of particles, associated importance weights
  posterior: sampling/importance resampling to revise posterior samples, $p(\cdot | D_{t-1}) \to p(\cdot | D_t)$
  sample regeneration by local smoothing of particulate prior
time-varying latent variables and model parameters

Volatilities (SD) of Currency Returns (monthly)

[Figure: eight panels of volatility (stdev), vertical range 0.0 to 0.15, time axis labelled 7602 to 9710; panels: DEM, JPY, GBP, AUD, CAD, CHF, FRF, NLG]

2 Latent Factors and their Volatilities (SD) (monthly)

[Figure: Factor 1 (DEM) and Factor 2 (JPY); for each, the factor trajectory (range -0.10 to 0.10) and its volatility (SD), time axis labelled 7602 to 9602]

3 Latent Factors and their Volatilities (SD) (monthly)

[Figure: Factor 1 (DEM), Factor 2 (JPY) and Factor 3 (GBP); for each, the factor trajectory (range -0.10 to 0.10) and its volatility (SD), time axis labelled 7602 to 9710]

Factor Loading Matrix X (monthly)

        Factor 1       Factor 2
DEM     1.00 (0.00)     0.00 (0.00)
JPY     0.55 (0.06)     1.00 (0.00)
GBP     0.68 (0.05)     0.48 (0.14)
AUD     0.23 (0.03)     0.35 (0.07)
CAD     0.04 (0.02)     0.03 (0.08)
CHF     1.04 (0.03)     0.23 (0.07)
FRF     0.96 (0.01)    -0.01 (0.02)
ESP     0.99 (0.01)    -0.01 (0.01)

Factor Loading Matrix X (monthly)

        Factor 1   Factor 2
DEM     1
FRF     1
ESP     1
CHF     1          0.2
GBP     0.7        0.5
JPY     0.5        1
AUD     0.2        0.3
CAD

Idiosyncratic Volatilities (SD) (monthly)

[Figure: eight panels of idiosyncratic volatility (SD), vertical range 0.0 to 0.15, time axis labelled 7602 to 9710; panels: DEM, JPY, GBP, AUD, CAD, CHF, FRF, NLG]

Financial Time Series

Models/forecasts feed into portfolio decision management
  Quintana et al invited talk, Valencia VII
Live/operational assessments of dynamic factor models
Improved sequential particle filtering: parameters
Time-varying loadings matrices $X_t$, parameters
Uncertainty about number of factors

Bayesian Time Series, Currently

Applied aspects:
  Financial modelling and forecasting
  Natural/engineering sciences: signal processing
  Spatial time series: epidemiology, environment, ecology
Models and methods:
  Highly structured multiple time series
  Spatial time series
Computational methods:
  Sequential simulation methods

Links & Materials

Books and papers: www.isds.duke.edu/mw
  Copious references to broad literatures
  1997 Tutorial: extensive and historical, at website
  Teaching materials, notes, from past time series courses
Software: www.isds.duke.edu/mw
  TVAR (matlab, fortran), AR component models, BATS, links
"Bayesian Forecasting", in Encyclopedia of Statistical Sciences (1998) (eds: S. Kotz, C.B. Read, and D.L. Banks), Wiley
West & Harrison (1997), Bayesian Forecasting & Dynamic Models, 2nd Edition, Springer
Aguilar, Prado, Huerta & West (1999), Valencia 6 invited paper

Key Recent (since 1995) & Current Authors

many/most are here!

Omar Aguilar (Lehman Bros)
Sid Chib (Washington Univ/St Louis)
Simon Godsill & Co (Cambridge)
Genshiro Kitagawa (ISM Tokyo)
Jane Liu (UBS NY)
Viridiana Lourdes (ITAM Mexico)
Michael Pitt (Warwick)
Raquel Prado (Santa Cruz)
Peter Rossi (Chicago)
Chris Carter (Hong Kong)
Sylvia Frühwirth-Schnatter (Vienna)
Gabriel Huerta (Univ New Mexico)
Robert Kohn (Sydney)
Hedibert Lopes (Rio)
Giovanni Petris (Univ Arkansas)
Nicolas Polson (Chicago)
José M Quintana (Nikko NY)
Neil Shephard (Oxford)

... and, of course, ...

Valencia VII Invited Papers
  Quintana, Lourdes, Aguilar & Liu: Global gambling: multivariate financial time series
  Davy & Godsill: Signal processing, latent structure, MCMC
... plus a number of contributed talks and posters


- SlidesDocument209 pagesSlidesNavaneeth Krishnan BNo ratings yet

- Sensitivity Analysis and Experimental Design: - Case Study of An NF-B Signal PathwayDocument24 pagesSensitivity Analysis and Experimental Design: - Case Study of An NF-B Signal PathwayAnna BelenciucNo ratings yet

- Sayadi FuzzyClusteringDocument61 pagesSayadi FuzzyClusteringMoJan1371No ratings yet

- Green's Function Estimates for Lattice Schrödinger Operators and Applications. (AM-158)From EverandGreen's Function Estimates for Lattice Schrödinger Operators and Applications. (AM-158)No ratings yet

- Machine Intelligence and Pattern RecognitionFrom EverandMachine Intelligence and Pattern RecognitionRating: 5 out of 5 stars5/5 (2)

- Introduction to the Mathematics of Inversion in Remote Sensing and Indirect MeasurementsFrom EverandIntroduction to the Mathematics of Inversion in Remote Sensing and Indirect MeasurementsNo ratings yet

- EViews 4.1 UpdateDocument54 pagesEViews 4.1 UpdateLuiza BogneaNo ratings yet

- Statistical Digital Signal Processing and Modeling: Monson H. HayesDocument6 pagesStatistical Digital Signal Processing and Modeling: Monson H. HayesDebajyoti DattaNo ratings yet

- Beck and Katz 2011Document28 pagesBeck and Katz 2011Tamas GodoNo ratings yet

- Ashwath Thesis PDFDocument90 pagesAshwath Thesis PDFAnuj MehtaNo ratings yet

- 151-Texto Del Artículo-2348-1-10-20180906 PDFDocument32 pages151-Texto Del Artículo-2348-1-10-20180906 PDFdacsilNo ratings yet

- IFoA Syllabus 2019-2017Document201 pagesIFoA Syllabus 2019-2017hvikashNo ratings yet

- M. Phil. in Statistics: SyllabusDocument12 pagesM. Phil. in Statistics: Syllabussivaranjani sivaranjaniNo ratings yet

- The Use of Triple Exponential Smoothing MethodDocument5 pagesThe Use of Triple Exponential Smoothing MethodRenjun GanNo ratings yet

- Human Brain Mapping - 2005 - Patel - A Bayesian Approach To Determining Connectivity of The Human BrainDocument10 pagesHuman Brain Mapping - 2005 - Patel - A Bayesian Approach To Determining Connectivity of The Human BrainKerem YücesanNo ratings yet

- Test Bank Questions Chapter 5Document5 pagesTest Bank Questions Chapter 5Anonymous 8ooQmMoNs1No ratings yet

- Borges Et Al (2018)Document10 pagesBorges Et Al (2018)Edjane FreitasNo ratings yet

- Chapter 16: Time-Series ForecastingDocument48 pagesChapter 16: Time-Series ForecastingMohamed MedNo ratings yet

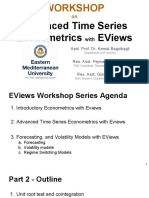

- Workshop 4 - Part 2 - Advanced Time Series Econometrics With EViewsDocument99 pagesWorkshop 4 - Part 2 - Advanced Time Series Econometrics With EViewsLyana Zainol100% (2)

- Vibration CriteriaDocument24 pagesVibration CriteriaDennis Espinoza100% (1)

- Research Paper Full Final Ryan Diedricks 20105521Document47 pagesResearch Paper Full Final Ryan Diedricks 20105521Ryan DiedricksNo ratings yet

- 9.-Jordi Gali - El Modelo Neokeynesiano)Document62 pages9.-Jordi Gali - El Modelo Neokeynesiano)VÍCTOR ALONSO ZAMBRANO ORÉNo ratings yet

- Exponential SmmothingDocument30 pagesExponential SmmothingJorge Alberto Beristain PerezNo ratings yet

- Module A StatisticsDocument36 pagesModule A Statisticsnageswara kuchipudiNo ratings yet

- Bayesian Dynamic Modelling: Bayesian Theory and ApplicationsDocument29 pagesBayesian Dynamic Modelling: Bayesian Theory and ApplicationsmuralidharanNo ratings yet

- Pendugaan Model Peramalan Harga Cabai Merah - OKE2Document12 pagesPendugaan Model Peramalan Harga Cabai Merah - OKE2Miftahul JanahNo ratings yet

- A Machine Learning Forecasting Model For COVID-19 Pandemic in IndiaDocument14 pagesA Machine Learning Forecasting Model For COVID-19 Pandemic in IndiaLuckysingh NegiNo ratings yet

- (TS) Time SeriesDocument819 pages(TS) Time SeriesMedBook DokanNo ratings yet

- Econometery ch2Document38 pagesEconometery ch2Abel AbelNo ratings yet

- An Advanced Sales Forecasting Using Machine Learning AlgorithmDocument4 pagesAn Advanced Sales Forecasting Using Machine Learning AlgorithmInternational Journal of Innovative Science and Research TechnologyNo ratings yet

- Lecture Notes Part 1Document79 pagesLecture Notes Part 1sumNo ratings yet

- Test For Stationarity and StablityDocument79 pagesTest For Stationarity and StablityTise TegyNo ratings yet

- LectDocument96 pagesLectCarmen OrazzoNo ratings yet

- Moanassar,+8986 26608 1 LEDocument13 pagesMoanassar,+8986 26608 1 LESnehal singhNo ratings yet

- MFM 5053 Chapter 1Document26 pagesMFM 5053 Chapter 1NivanthaNo ratings yet

- Aalbers 2022Document21 pagesAalbers 2022Lidia Altamura GarcíaNo ratings yet